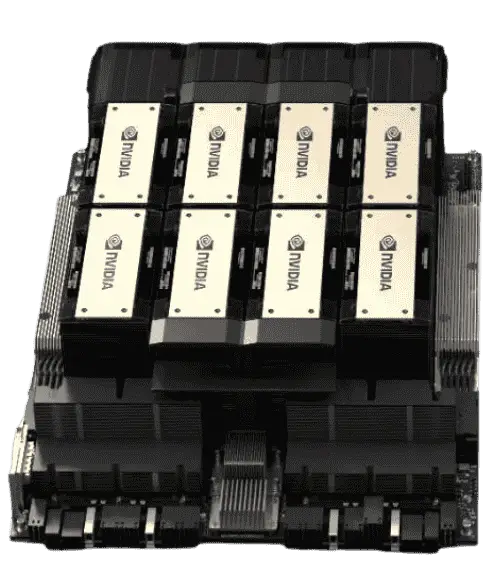

Significant price hikes on 5090, L40S and Enterprise Blackwell Series GPUs continue into Q1 2026. Please note that credit card payments will only work if USD or AED is selected as the currency in the top-right corner of the website. For US customers: before placing an order for any crypto miners, ask a live chat sales rep or toll-free phone agent about any potential tariffs. HGX B200 lead times are now between 8 and 20 weeks for Golden SKU selections, with custom BOMs exceeding 26 weeks. HGX H200 offerings are in stock, as well as limited HGX B300. We are now certified partners of Supermicro in both the NA and MENA regions.